When the camera count on a live production exceeds ten, the relationship between the LED video wall and the broadcast infrastructure shifts from a technical footnote to a central production challenge. Each camera, positioned at its own angle, distance, and focal length relative to the LED surface, perceives the wall differently. A camera that sees no moiré from a 45-degree position may show significant beat-frequency artifacts from 90 degrees. A lens set for an 85mm equivalent field of view may capture a perfectly calibrated color temperature, while a 24mm wide establishing shot at the same calibration settings reveals a visible color shift between tile seams, since the wide lens views the outer tiles at steeper angles, where LED color and brightness typically shift.

Managing an LED wall in a 20+ camera live production, a scale found on major televised events, award shows, and the largest live-streamed corporate keynotes, requires a production approach that treats the LED surface as an active participant in the broadcast camera system rather than a passive backdrop.

Camera-LED Interaction: The Technical Fundamentals

The fundamental technical challenge where LED walls meet broadcast cameras is moiré: the visible beat-frequency interference pattern produced when the periodic pixel grid of the LED display is sampled by the periodic photosite grid of the camera sensor. Moiré severity depends on how large the LED pixel pitch appears on the sensor (set by the pitch itself, the camera's distance from the wall, and the lens focal length) relative to the sensor's photosite pitch. Because camera position and focal length change continuously during a live production, moiré risk changes with them, and it is generally worst when the wall is in sharp focus.
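The dependence on pitch, distance, and focal length can be made concrete with a deliberately simplified one-dimensional sampling model. The sketch below is an illustration under stated assumptions, not a production moiré predictor: it projects the LED pitch onto the sensor with a thin-lens magnification, compares the resulting grid frequency with the sensor's Nyquist limit, and, if the grid aliases, reports how wide the folded-down beat pattern is. The function names and the 6 µm photosite size in the usage note are hypothetical, and the model ignores the Bayer filter, optical low-pass filter, lens MTF, defocus, and LED grid harmonics.

```python
import math

def imaged_pitch_um(led_pitch_mm: float, distance_m: float, focal_mm: float) -> float:
    """Width of one LED pixel pitch as projected onto the sensor (thin-lens model)."""
    distance_mm = distance_m * 1000.0
    magnification = focal_mm / (distance_mm - focal_mm)
    return led_pitch_mm * magnification * 1000.0  # mm -> micrometres

def moire_estimate(led_pitch_mm: float, distance_m: float, focal_mm: float,
                   photosite_um: float) -> tuple[bool, float]:
    """Return (aliased, beat period in photosites) for the LED grid's fundamental.

    Simplified 1-D model (assumption): ignores the Bayer color filter, which samples
    each color more coarsely, plus the optical low-pass filter, lens MTF, defocus,
    and the higher harmonics of the LED emitter grid.
    """
    f_led = 1.0 / imaged_pitch_um(led_pitch_mm, distance_m, focal_mm)  # cycles per µm
    f_sample = 1.0 / photosite_um                                       # cycles per µm
    if f_led <= f_sample / 2:
        return False, math.inf  # below Nyquist: the grid is resolved, not aliased
    k = round(f_led / f_sample)            # nearest multiple of the sampling frequency
    f_alias = abs(f_led - k * f_sample)    # frequency the grid folds down to
    if f_alias == 0:
        return True, math.inf  # exact alignment: the beat period is unbounded
    return True, f_sample / f_alias        # beat period expressed in photosites
```

Assuming 6 µm photosites, a P2.6 wall shot from 5 m on a 50 mm lens stays below Nyquist, while the same wall at 10 m on a 24 mm lens aliases into a beat roughly 25 photosites wide, the kind of broad, slow pattern that reads as moiré on screen. This is why the same wall can be clean on one camera and problematic on another.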

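The mitigation strategies available to the production team include pixel pitch selection, refresh rate maximization, and camera diffusion. Finer pixel pitch generally reduces moiré risk at typical camera positions; P2.6 and below is often cited as camera-safe for distances above 5 meters, although moiré can still appear at particular combinations of distance and focal length. Maximizing refresh rate targets a related but distinct artifact, scan-line banding, rather than moiré itself: Brompton Tessera-driven systems running at 7,680 Hz largely eliminate scan-line artifacts at standard broadcast shutter speeds, though very short shutter times can still expose them. Camera diffusion, such as a 1/8 Black Pro-Mist filter on each camera that frames the wall, reduces moiré visibility by introducing controlled optical diffusion, at the cost of some highlight halation and contrast rather than a significant loss of sharpness.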
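Why refresh rate interacts with shutter speed can be shown with simple arithmetic. The sketch below assumes a crude pulse-train model (one light pulse per refresh cycle, which real LED drivers with PWM scrambling only approximate): an exposure spanning N plus a fraction of refresh cycles catches either N or N + 1 pulses depending on its phase, so rolling-shutter rows read out at different moments can differ by about one pulse in N. The function name `banding_indicator` is hypothetical.

```python
import math

def banding_indicator(refresh_hz: float, shutter_s: float) -> tuple[float, float]:
    """Return (refresh cycles per exposure, worst-case relative row-to-row variation).

    Pulse-train model (assumption): one light pulse per refresh cycle, so an exposure
    of N + frac cycles captures N or N + 1 pulses depending on its start phase.
    """
    cycles = refresh_hz * shutter_s
    nearest = round(cycles)
    if nearest > 0 and math.isclose(cycles, nearest, rel_tol=1e-9):
        return cycles, 0.0  # whole number of cycles: every row integrates the same light
    whole = math.floor(cycles)
    if whole == 0:
        return cycles, 1.0  # exposure shorter than one cycle: a row can miss the pulse entirely
    return cycles, 1.0 / whole  # one extra or missing pulse out of `whole`
```

At 7,680 Hz, a 1/60 s exposure spans exactly 128 refresh cycles and a 1/50 s exposure spans 153.6, so row-to-row variation stays below one percent in this model; a 1/2000 s shutter spans only 3.84 cycles, where a one-pulse difference becomes a large fraction of the exposure.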

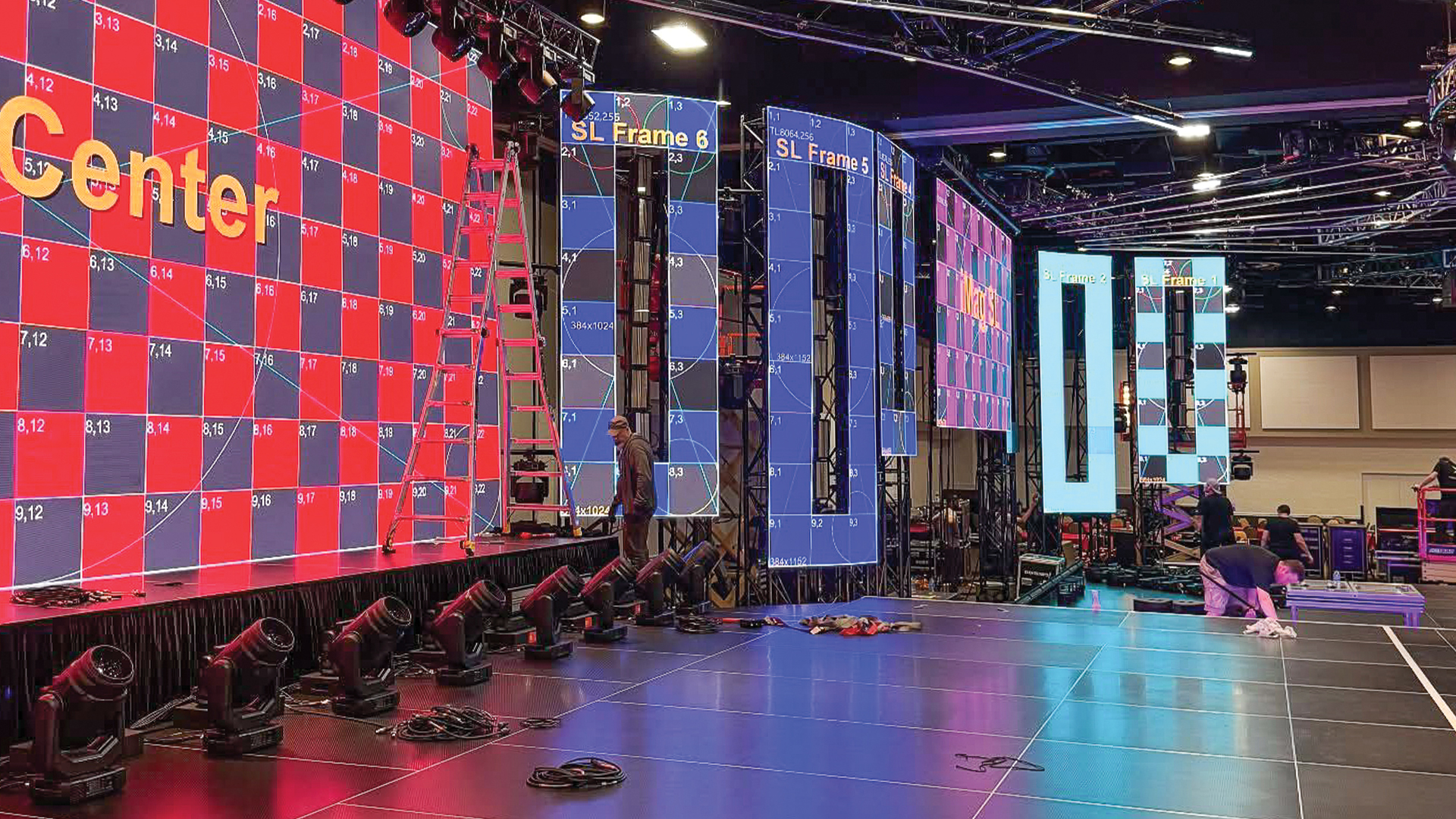

Content Mapping for Multi-Camera Geometry

In a 20+ camera production, the LED wall serves multiple simultaneous functions depending on which camera the broadcast director has selected. A wide camera at stage right sees the wall as a background environment; a tight camera at center captures it as an intimate, frame-filling graphic; a camera mounted on a Technocrane or Studio Arm moves through multiple perspectives in a single shot, changing its geometric relationship to the wall continuously.

Content designed for the in-room audience, formatted for the widest possible viewing angle and maximum visual impact at scale, may not read correctly in tight camera framings. The solution adopted by productions including The BRIT Awards and The Grammy Awards is multi-zone content mapping: the LED wall is divided into virtual zones, each fed with content optimized for the camera position most likely to frame that zone. The disguise media server's virtual camera preview lets content designers see every camera's view of the LED surface in real time during programming, making camera-specific optimization practical in a way that would be far harder to achieve by checking live camera feeds one at a time.
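The zone-to-camera idea can be sketched geometrically. The example below is a hypothetical planning aid, not how disguise or any other media server assigns zones: it models a flat wall along one axis, gives each camera a position, pan angle, and horizontal field of view derived from focal length and an assumed 2/3-inch sensor width (about 9.6 mm), and maps each zone to the camera that sees the zone's center closest to its frame center. The class, function names, and the "smallest off-axis angle" heuristic are all assumptions for illustration.

```python
import math
from dataclasses import dataclass

@dataclass
class Camera:
    name: str
    x: float          # metres along the wall (hypothetical stage coordinates; wall on y = 0)
    y: float          # metres in front of the wall
    pan_deg: float    # 0 = pointing straight at the wall, positive = toward +x
    focal_mm: float
    sensor_width_mm: float = 9.6  # assumed 2/3-inch broadcast sensor width

    def half_fov_deg(self) -> float:
        """Half of the horizontal field of view for this focal length."""
        return math.degrees(math.atan(self.sensor_width_mm / (2 * self.focal_mm)))

    def off_axis_deg(self, zone_x: float) -> float:
        """Angle between the optical axis and the ray to a point on the wall."""
        bearing = math.degrees(math.atan2(zone_x - self.x, self.y))
        return abs(bearing - self.pan_deg)

def assign_zones(cameras: list[Camera], wall_width_m: float, n_zones: int) -> dict:
    """Map each zone index to the camera that frames its center most squarely (or None)."""
    zone_width = wall_width_m / n_zones
    mapping = {}
    for i in range(n_zones):
        zone_x = (i + 0.5) * zone_width  # evaluate only the zone's center point
        visible = [c for c in cameras if c.off_axis_deg(zone_x) <= c.half_fov_deg()]
        best = min(visible, key=lambda c: c.off_axis_deg(zone_x)) if visible else None
        mapping[i] = best.name if best else None
    return mapping
```

For a 12 m wall split into three zones, a wide camera 15 m out on center (8 mm lens) and a tight camera 8 m out at the left (40 mm lens) would divide the wall so the left zone goes to the tight camera and the other two to the wide camera. A real plan would also weigh vertical framing, shot lists, and moving cameras.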

Color Calibration for Broadcast Accuracy

The color accuracy requirements of broadcast LED applications are more demanding than those of live audience viewing. The human eye is remarkably forgiving: it adapts to color shifts and slight non-uniformity, so we see what we expect to see in a familiar environment. A broadcast camera's sensor has no such adaptive intelligence; it records light through its own fixed spectral sensitivities, which respond differently from the eye to the narrow-band red, green, and blue emitters of an LED wall. The resulting image is then viewed by millions of people on calibrated reference monitors in broadcast facilities, 4K HDR televisions at home, and mobile devices with wide color gamut displays.

The broadcast production standard for LED wall color calibration typically specifies a Delta-E uniformity target of 2.0 or better across the full wall surface, measured with a spectroradiometer. Meeting it requires not just factory calibration of individual tiles but a complete in-situ calibration of the assembled installation, accounting for the optical and thermal effects of the specific frame construction and ventilation, and for the ambient light environment of the specific show. Brompton's Hydra measurement system, which uses an automated measurement robot to capture calibration readings from hundreds of points across a wall surface, has become the premium calibration tool for broadcast-critical LED deployments. It is capable of completing a 100-tile wall calibration in under two hours while meeting the uniformity target and keeping the wall's output within the Rec.709 color space.
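A uniformity check against a Delta-E target can be sketched from per-tile XYZ readings. The example below is a minimal sketch under stated assumptions: readings are normalized so the target white has Y = 100 under a D65 reference, each tile is compared with the wall's mean color, and the simple CIE76 formula (Euclidean distance in CIELAB) is used for brevity. The text does not say which Delta-E formula the target refers to; CIEDE2000 is the more perceptually uniform choice and would give different numbers. The function names and sample values are hypothetical.

```python
import math

D65_WHITE = (95.047, 100.0, 108.883)  # CIE XYZ of the D65 reference white, Y normalized to 100

def xyz_to_lab(xyz, white=D65_WHITE):
    """Convert CIE XYZ to CIELAB relative to the given reference white."""
    def f(t):
        delta = 6 / 29
        return t ** (1 / 3) if t > delta ** 3 else t / (3 * delta ** 2) + 4 / 29
    fx, fy, fz = (f(c / w) for c, w in zip(xyz, white))
    return (116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz))

def delta_e76(lab1, lab2):
    """CIE76 color difference: Euclidean distance in CIELAB."""
    return math.dist(lab1, lab2)

def uniformity_report(tile_xyz, target=2.0):
    """Return (worst Delta-E of any tile from the wall mean, whether the target is met)."""
    labs = [xyz_to_lab(xyz) for xyz in tile_xyz]
    mean = tuple(sum(channel) / len(labs) for channel in zip(*labs))  # wall-average color
    worst = max(delta_e76(lab, mean) for lab in labs)
    return worst, worst <= target
```

In this model, a wall of four tiles where one tile reads 10 percent dimmer than the other three has a worst-case Delta-E of about 3 from the wall mean, so it fails a 2.0 target even though the dim tile's chromaticity is unchanged.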

IMAG Systems: Live Camera Integration With LED

IMAG (Image Magnification), the practice of feeding live camera output to large screens flanking a main stage, adds a layer of visual complexity when those screens are LED rather than projection. The LED IMAG system must be synchronized to the main stage LED system to prevent visible frame-timing artifacts, such as surfaces updating at different moments, in shots that include more than one screen, and its color calibration must match the main system closely enough that wide shots incorporating both surfaces read as a unified visual environment.

LED IMAG synchronization locks all LED processors (main stage, IMAG left, IMAG right, and any additional distributed displays) to a common time reference, ensuring frame-synchronous operation across the complete display system. That reference has traditionally been a house genlock signal; in IP-based facilities it is SMPTE ST 2059, the broadcast profile of IEEE 1588 Precision Time Protocol (PTP). The Brompton Tessera SX40 processor, which handles this synchronization natively, is the industry benchmark for multi-processor locked systems, maintaining inter-processor synchronization within a 1/4 frame tolerance (approximately 4.2 ms at 60 Hz, where one frame lasts about 16.7 ms) across networks of up to 32 synchronized processing units.
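The reason a shared PTP time yields frame-synchronous output can be sketched in a few lines. SMPTE ST 2059-1 aligns periodic signals to a common epoch, so any device that knows the current PTP time and the frame rate can compute the same frame boundaries independently. The sketch below is a simplified, hypothetical illustration of that idea, not a processor's firmware: it treats the epoch as a frame start, represents PTP time as an exact fraction of seconds to avoid floating-point drift, and ignores time code, fields, and drop-frame handling. It also computes the quarter-frame tolerance quoted above.

```python
import math
from fractions import Fraction

def frame_period(rate: Fraction) -> Fraction:
    """Exact frame duration in seconds (e.g. 1001/60000 s for 59.94 Hz)."""
    return 1 / rate

def next_frame_boundary(ptp_seconds: Fraction, rate: Fraction) -> Fraction:
    """First frame boundary at or after ptp_seconds, counting frames from the epoch.

    Simplified assumption: the epoch itself is a frame start, so every device locked
    to the same grandmaster derives identical boundaries without further messaging.
    """
    period = frame_period(rate)
    return math.ceil(ptp_seconds / period) * period

def quarter_frame_ms(rate: Fraction) -> float:
    """A 1/4-frame timing budget in milliseconds."""
    return float(frame_period(rate) / 4 * 1000)
```

Two processors that read the PTP clock at slightly different moments within the same frame compute the same next boundary, which is what keeps the main stage and IMAG surfaces updating together. The quarter-frame budget is about 4.17 ms at 60 Hz and 5 ms at 50 Hz.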